Multiple Inheritance vs. Traits or Protocol Extensions

A coworker asked me at WWDC how Swift protocol extensions (or Scala traits, or Ruby mixins) are substantially better than multiple inheritance. Here are a few links and thoughts, and finally a description of how conflicts are handled in Swift 2…

This Stack Exchange answer has a good summary: the problem with multiple inheritance boils down to ambiguity. Start by reading that answer.

I have a gut feeling that this ambiguity has worse consequences for variables than for methods, particularly since most of the languages I’m about to mention support something that looks like multiple inheritance for methods but not for variables. But I’m not totally sure why that is. (Tell me.)

This post about Traits in Scala explains the diamond problem (which the previous SE post alludes to):

Many programmers exhibit a slight, involuntary shudder whenever multiple inheritance is mentioned. Ask them to identify the source of their unease and they will almost certainly finger the so-called Deadly Diamond of Death inheritance pattern. In this pattern, classes B and C both inherit from class A, while class D inherits from both B and C. If B and C both override some method from A, there is uncertainty as to which implementation D should inherit.

Different languages solve this uncertainty in different ways. C++ implements a system in which developers must manually resolve conflicts by overriding each conflicted method (A’s entire interface) at the “bottom point” of the diamond (D in this case). The implementation in D has the ability to delegate to the implementation in B and to the implementation in C. Strangely, D will also have two complete copies of A’s fields: one for B’s methods to operate on and one for C’s methods. In practice, diamond inheritance in C++ usually ends up resulting in exceedingly verbose and (at least) slightly hard-to-understand code.

(From there the post delves deeper into details specific to Scala traits, so I’ll stop quoting here. It’s worth reading the rest later if you’re interested in this sort of thing.)

C++ is the language most notorious for complexity caused by multiple inheritance. Many other popular languages, such as Java and C#, sidestep the problem by not allowing multiple inheritance of classes (a class can still implement several interfaces). Simple!

Still other languages (Ruby, Scala, and now Swift, among others) use Traits, Mixins, or Protocol Extensions, which for the oversimplified purposes of this discussion are mostly interchangeable terms. Roughly speaking, these let a programmer define an interface or protocol that includes method implementations, and then apply one or more of those protocols to an existing type.
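As a rough sketch of what that looks like in Swift (the `Greeter` protocol, `greet()`, `shout`, and `Person` are hypothetical names made up for illustration, and the syntax is current Swift rather than Swift 2), a protocol extension supplies a method body that every conforming type picks up, including a type you didn’t write yourself:

```swift
// Hypothetical protocol: conforming types must supply a name.
protocol Greeter {
    var name: String { get }
}

// The protocol extension supplies implementations that every
// conforming type gets for free.
extension Greeter {
    func greet() -> String {
        return "Hello, \(name)!"
    }

    // Computed properties are allowed in an extension...
    var shout: String { return greet().uppercased() }
    // ...but stored properties are not; this line would not compile:
    // var greetCount = 0
}

// A type we define ourselves...
struct Person: Greeter {
    let name: String
}

// ...and an existing type we didn't write, conformed after the fact.
extension String: Greeter {
    var name: String { return self }
}

print(Person(name: "Ada").greet())  // Hello, Ada!
print("World".greet())              // Hello, World!
```

Note that the storage behind `name` always comes from the conforming type (here, `Person`’s `let`), since an extension can’t add stored properties.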

Clearly, a method defined in two traits (or protocols, or mixins) could conflict if the type they’re applied to doesn’t provide an implementation, and the languages solve this in different ways.

Scala basically solves it by saying “whichever trait you mixed in last wins.” (It gets a little more complex, particularly with super calls; the post I linked earlier describes this in more detail. But this description will do here.)

I don’t know offhand how Ruby solves it.

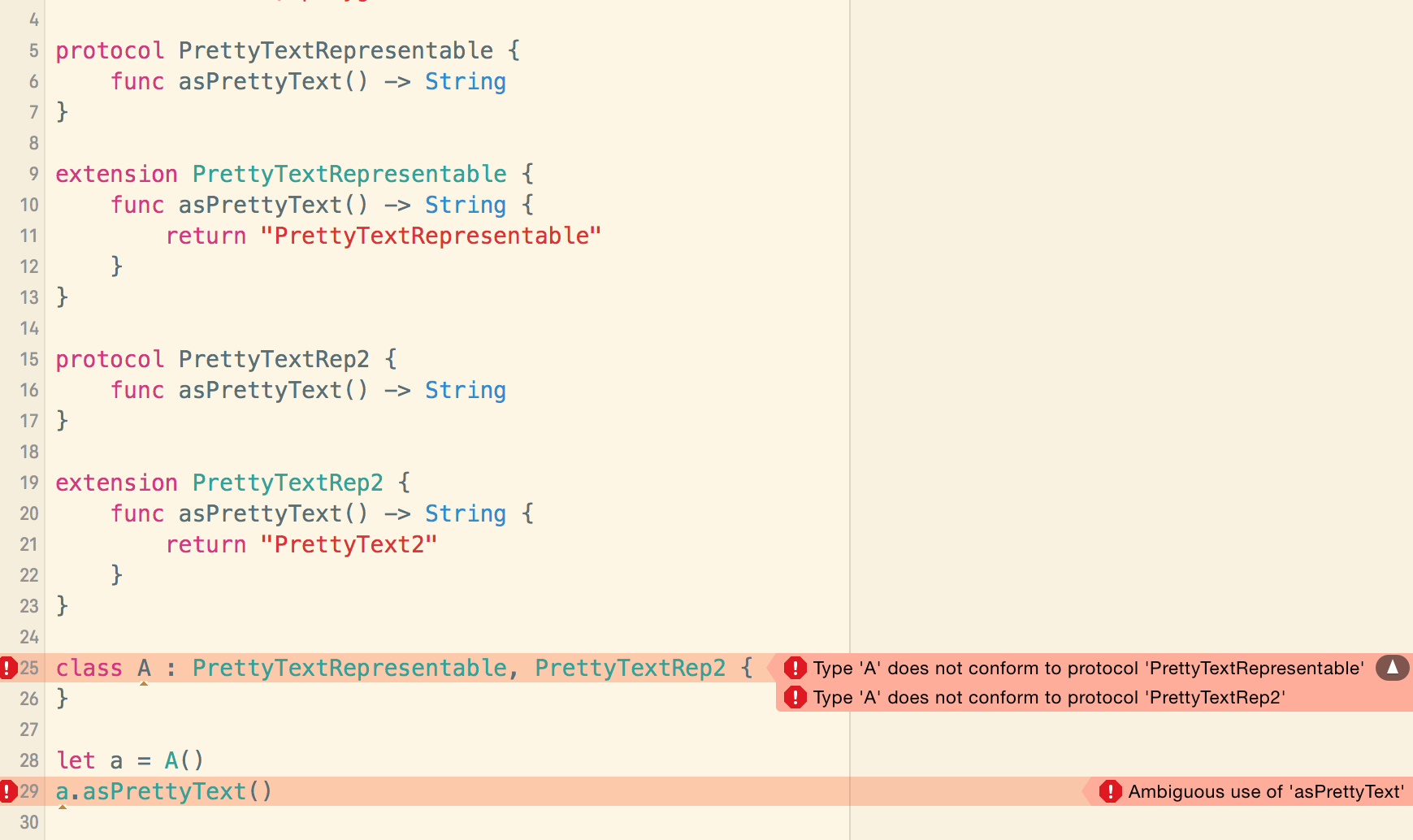

In Swift2, if you try to conform to two protocols which each provide an implementation for a method with the same name, the compiler will refuse to compile your code:

I think the ”Type … does not conform to protocol …” error messages could be improved, but then ¯\_(ツ)_/¯

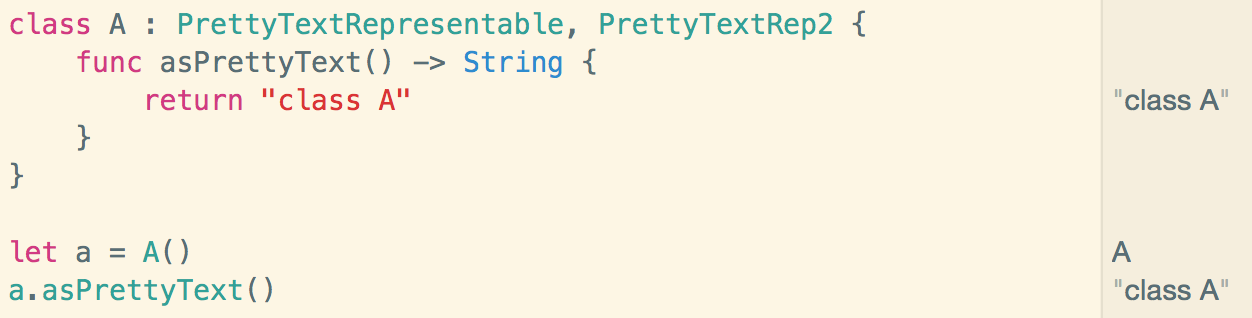

Of course, if you provide an implemention for the conflicting method, all is well:
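Since the screenshots don’t show the code as text, here is a rough sketch of the same kind of conflict, arranged as the diamond from the Scala post. It is not the code from the screenshots: the protocols `A`, `B`, `C`, the struct `D`, and the `hello()` method are made up for illustration, and it uses current Swift syntax (`any A`) rather than Swift 2’s.

```swift
// The diamond, expressed with protocols: B and C both refine A,
// and each provides its own default for A's requirement.
protocol A {
    func hello() -> String
}

protocol B: A {}
protocol C: A {}

extension B {
    func hello() -> String { return "from B" }
}

extension C {
    func hello() -> String { return "from C" }
}

// D sits at the bottom of the diamond. Neither B's nor C's default is
// more specific than the other, so deleting D's own hello() should make
// the compiler reject the conformance as ambiguous. Implementing it here
// resolves the conflict.
struct D: B, C {
    func hello() -> String { return "from D" }
}

let d = D()
print(d.hello())      // from D

// Calls through the protocol type also reach D's implementation.
let asA: any A = d
print(asA.hello())    // from D
```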

Updates

Here’s some of the feedback/corrections I’ve received on Twitter:

@cdzombak if they are constrained protocol extensions, it uses a partial ordering based on the constraints. Otherwise, error.

— Doug Gregor (@dgregor79) June 16, 2015

@cdzombak ruby also uses last-defined-wins, though there is hidden complexity in eigenclasses I shouldn't even have mentioned b/c monsters

— Bill Tozier (@Vaguery) June 15, 2015
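Doug Gregor’s point about constrained protocol extensions can be sketched like this. The protocol `Describer` and the types `Plain` and `Token` are hypothetical, and the code uses current Swift syntax. When a conforming type satisfies the constraints of more than one extension that implements the same method, Swift uses the one with the more specific constraints:

```swift
protocol Describer {
    func describe() -> String
}

// Unconstrained default: applies to every conforming type.
extension Describer {
    func describe() -> String { return "something" }
}

// Constrained default: only applies when Self is also Equatable.
// Its constraints are strictly more specific than the one above.
extension Describer where Self: Equatable {
    func describe() -> String { return "something equatable" }
}

struct Plain: Describer {}
struct Token: Describer, Equatable {}

print(Plain().describe())   // something
print(Token().describe())   // something equatable
```

If a type instead matched two constrained defaults whose constraints were unrelated (say, one requiring `Equatable` and the other `CustomStringConvertible`), neither would be more specific, which is the “Otherwise, error.” case from the tweet.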